If we continue developing black-box AI that can engage in recursive self-improvement, we may eventually realize we’ve been playing too carelessly with Pandora’s box. We should look to and invest in projects that are either naturally explainable or able to pull high-level, human-friendly concepts from high-performance neural networks. As artificial narrow intelligence speeds down the path to artificial general intelligence - and then artificial superintelligence - the case for explainable AI grows exponentially as well. Technology is a tool with incredibly positive implications, but, as technologists and designers, we must aim to ensure our products are transparent, accountable, responsible and auditable. Modern AI solutions have already proven themselves capable of causing great social, physical and financial harm. Explainable AI prioritizes transparency, and transparency builds trust, which is required to achieve broad adoption of AI solutions. I’m unsure whether explainable AI was a topic of discussion at each organization's respective labs during these programs, but I firmly believe that developers must prioritize explainable AI as we continue innovating at breakneck speeds. But what about systems with life-threatening, long-lasting consequences? How can we trust a recommendation if we can’t understand how it was reached? What if there is innate bias or butterfly-effect consequences? How would we even know? In a harmless game of chess, Jeopardy! or Go, it makes sense to choose superior functionality over transparency. It leveraged an endless positive feedback cycle to optimize its strategies until it was ready to challenge - and defeat - Ke Jie, despite Ke playing a near-perfect game (by AlphaGo’s own analysis).
Neural networks are hyper-complex, nonlinear models that require advanced network design, recognition capabilities and experiential training. AlphaGo played against itself, over and over, filing away each successful and failed attempt into its knowledge base. Loosely modeled after the human brain, neural-network-based systems use artificial neurons, called nodes, to process information and perform operations. Unlike Deep Blue’s Bayesian structure, AlphaGo was built using artificial neural networks. Then, in 2017, AlphaGo defeated Go world champion Ke Jie. Operating like a search engine with impressive natural language processing (NLP) and reasoning abilities, Watson proved computers could master not only mathematical strategy games but also knowledge- and communication-based games.
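
To make the idea of a "node" concrete, here is a minimal sketch of a single artificial neuron. It is an illustration only and not AlphaGo's architecture: the function name `node_output`, the sigmoid activation and the sample weights are all assumptions chosen for the example.

```python
import math
from typing import Sequence


def node_output(inputs: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """Compute one node's output: a weighted sum of inputs passed through a nonlinearity.

    The sigmoid activation is an assumption made for this sketch; real networks
    use many different activation functions.
    """
    # Weighted sum of the incoming signals plus a bias term.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The nonlinear squashing step is what makes stacked nodes hard to read by eye.
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical inputs and weights, for illustration only.
activation = node_output([1.0, 2.0], [0.5, -0.25], bias=0.0)
```

A single node like this is easy to inspect. The explainability problem appears when millions of them are chained together and their weights are set by training rather than by a programmer.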


In 2011, the world watched as IBM’s Watson dominated an exhibition Jeopardy! match with the show’s two most celebrated players, one of whom had won 74 consecutive shows. Deep Blue was an example of explainable AI, so its decisions were transparent and later easily understood by designers. But technology has progressed dramatically since 1997.

Yet, because of Deep Blue’s Bayesian structure, its programmers could audit the computer’s decision-making afterward and determine, in retrospect, why it had chosen to act a certain way. In order to defeat the greatest chess player in modern history, Deep Blue needed to be able to compute the game map and potential future consequences much more accurately than its programmers - or any human opponent - could. Humans tend to play chess based on pattern recognition and intuition, whereas machines consider billions of possibilities, almost instantaneously, before deciding on the best course of action. Eventually, the computer boasted an impressive library of Bayesian networks, or decision trees, which pulled from probability theory, expected utility maximization and other mathematical systems to suggest the best scenario. By training the system to make decisions independently, the developers sacrificed their ability to fully predict Deep Blue’s game behavior. During development, engineers provided an opening library of moves, added features, improved computation speed and sparred the system against chess grandmasters. The 1997 version was capable of searching between 100 and 200 million positions per second, depending on the type of position, and of reaching a depth of 20 or more pairs of moves.


The software carried out the more basic aspects of the chess computations, while the accelerator chips searched through a tree of possibilities to calculate the best moves.
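
Searching "a tree of possibilities" can be sketched with a textbook minimax search with alpha-beta pruning over a toy game tree. This is a simplified illustration, not a reconstruction of Deep Blue's hardware search: the nested-list tree, the leaf scores and the `alphabeta` function are all hypothetical. The sketch also returns the line of play behind the chosen score, which hints at why this style of search can be audited after the fact.

```python
from typing import List, Tuple, Union

# Toy game tree (an assumption for this sketch): a leaf is a numeric score from
# the maximizing player's point of view; an internal node is a list of children.
Tree = Union[float, List["Tree"]]


def alphabeta(
    node: Tree,
    maximizing: bool = True,
    alpha: float = float("-inf"),
    beta: float = float("inf"),
) -> Tuple[float, List[int]]:
    """Return (best score, list of child indices leading to that score)."""
    if not isinstance(node, list):
        return float(node), []  # leaf: nothing left to search

    best = float("-inf") if maximizing else float("inf")
    best_path: List[int] = []
    for i, child in enumerate(node):
        value, path = alphabeta(child, not maximizing, alpha, beta)
        improved = value > best if maximizing else value < best
        if improved:
            best, best_path = value, [i] + path
        if maximizing:
            alpha = max(alpha, best)
        else:
            beta = min(beta, best)
        if alpha >= beta:
            break  # prune: the opponent would never allow this branch
    return best, best_path


# Three candidate moves, each answered by two possible replies (made-up scores).
score, line = alphabeta([[3, 5], [2, 9], [0, 1]])
```

Here `score` is the value of the best move assuming the opponent replies as well as possible, and `line` records which branch was taken at each level, so a reviewer can trace the decision step by step.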

Deep Blue was essentially a hybrid, a general-purpose supercomputer processor outfitted with chess accelerator chips. Big data was in its infancy, and the hardware couldn’t have supported large networks anyway.

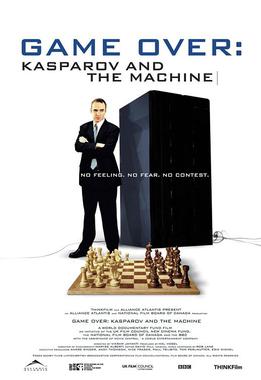

While certainly AI, Deep Blue relied less on machine learning than current systems do. By 1997, Deep Blue was sophisticated enough to defeat Kasparov, the reigning world champion.